The Model Is the Commodity. Microsoft Just Proved It.

Microsoft has invested $13 billion in OpenAI. Then, in September 2025, it quietly added Anthropic’s Claude Sonnet 4 and Claude Opus 4.1 to Copilot Studio. By January 2026, Claude was enabled by default for most Microsoft 365 Copilot commercial tenants. By March 2026, Microsoft had shipped a feature called Critique, in which GPT drafts a response and Claude reviews it for accuracy before the user ever sees it.

Two frontier models, one from a company Microsoft has backed to the tune of $13 billion and one from that company’s direct rival, now run in sequence inside a single enterprise product, under Microsoft’s brand, at Microsoft’s price point. And those are just the two making headlines: Azure AI Foundry already hosts models from Meta, Mistral, Cohere, xAI (Grok), and others.

The Claude add-on for Office is now better than the native Copilot. If that doesn’t tell you where the value in AI actually lives, nothing will.

What Microsoft Is Actually Doing Here

The framing from Microsoft’s communications is about “model choice” and “flexibility.” That’s the customer-facing story. The more interesting story is what the product architecture reveals about Microsoft’s actual strategy.

Copilot is not a model. It never was. Copilot is an application layer, a distribution channel, a UX shell, an enterprise integration, a compliance wrapper, and a brand. The model running underneath is a runtime dependency. Microsoft is now treating it exactly like that: a dependency it can swap, upgrade, or combine based on task performance, not loyalty to a specific provider.
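To make the “runtime dependency” point concrete, here is a minimal Python sketch of the pattern, assuming a simple prompt-in, text-out interface. `ChatModel`, `StubProviderA`, `StubProviderB`, and `AssistantApp` are hypothetical names invented for illustration. They are not Microsoft’s code or any vendor’s SDK, and the stubs return canned strings instead of calling a real model.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Anything that turns a prompt into text. The app depends only on this."""

    def complete(self, prompt: str) -> str: ...


class StubProviderA:
    """Hypothetical stand-in for one vendor's model client (no real API call)."""

    def complete(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class StubProviderB:
    """Hypothetical stand-in for a second vendor's model client (no real API call)."""

    def complete(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


class AssistantApp:
    """The product layer: name, UX, and integrations stay fixed; the model is injected."""

    def __init__(self, model: ChatModel) -> None:
        self.model = model

    def swap_model(self, model: ChatModel) -> None:
        # Changing or upgrading the supplier touches one field, not the product.
        self.model = model

    def answer(self, question: str) -> str:
        return self.model.complete(question)


app = AssistantApp(StubProviderA())
print(app.answer("Summarize Q3"))  # [provider-a] Summarize Q3
app.swap_model(StubProviderB())
print(app.answer("Summarize Q3"))  # [provider-b] Summarize Q3
```

The design choice is ordinary dependency injection: the application owns the interface, and suppliers only have to satisfy it.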

When GitHub Copilot’s automatic model selection began primarily routing to Claude Sonnet 4 in VS Code, GitHub didn’t rename the product. Nobody at Microsoft had a crisis about the OpenAI partnership. The product kept its name, its pricing, and its user relationship. The model changed. Nobody noticed.

That’s the definition of a commodity: something interchangeable, where the choice of a specific supplier doesn’t change the customer’s experience or the product’s value proposition.

The Critique Feature Makes the Argument Perfectly

The new Critique workflow in Copilot’s Researcher agent is the clearest illustration of this dynamic. GPT-4o generates a research response. Claude reviews it for accuracy, completeness, and citation quality before delivering it to the user. Microsoft says this multi-model pipeline produces a 13.8% improvement on the DRACO deep research benchmark, ahead of standalone tools from OpenAI, Google, Perplexity, and Anthropic itself.

Think about that for a second. Microsoft is outperforming Anthropic’s own research products by orchestrating Anthropic’s model inside its own application layer. The model is a tool. The orchestration, the UX, the data integration, the enterprise trust framework, that’s the product.
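Microsoft hasn’t published how Critique is implemented, so the following is only a sketch of the general draft-then-review pattern described above, assuming each model can be treated as a function from prompt to text. `critique_pipeline`, the review prompt wording, and the two stub models are hypothetical.

```python
from typing import Callable

# Hypothetical stand-in: a "model" is any function from prompt text to response text.
Model = Callable[[str], str]


def critique_pipeline(drafter: Model, reviewer: Model, question: str) -> dict[str, str]:
    """Draft with one model, then have a second model review the draft."""
    draft = drafter(question)
    review_prompt = (
        "Review the draft below for accuracy, completeness, and citation quality. "
        "Return a corrected version.\n\n"
        f"Question: {question}\n\nDraft:\n{draft}"
    )
    final = reviewer(review_prompt)
    # Only `final` would be shown to the user; the draft is kept for auditing.
    return {"draft": draft, "final": final}


def stub_drafter(prompt: str) -> str:
    # Canned output in place of a real drafting model.
    return f"draft answer to: {prompt}"


def stub_reviewer(prompt: str) -> str:
    # Canned output that echoes the last line of the prompt (the draft itself).
    return "reviewed: " + prompt.splitlines()[-1]


result = critique_pipeline(stub_drafter, stub_reviewer, "What changed in Q3?")
print(result["final"])  # reviewed: draft answer to: What changed in Q3?
```

Note that the pipeline never needs to know which vendor sits behind `drafter` or `reviewer`; that knowledge lives in whatever code wires the functions together.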

The end user sees one thing: Copilot. They don’t know which model ran. They don’t care. They’re paying $30/user/month to Microsoft, not to Anthropic, not to OpenAI. Microsoft captures the value. The model providers supply the capability.

This Is the Fundamental Economics of Platform vs. Component

This pattern has played out in software before. The database engine was once a strategic asset. Then cloud happened, and the database became a managed service from AWS, Azure, or GCP, i.e., a commodity abstracted behind an API, priced by consumption, swappable without the application knowing. The value moved up the stack to whoever owned the application layer, the distribution, and the customer relationship.

AI models are following the same trajectory, faster.

The raw intelligence, the ability to reason, generate text, write code, and summarize documents, is becoming abundant. Every frontier lab is closing the capability gap on the others. The delta between GPT-4o and Claude on most enterprise tasks is not zero, but it’s small enough that the decision of which model to use is now a task-level optimization, not an architecture-level commitment. Microsoft’s multi-model approach formalizes exactly this: route each task to the best model for that task, not to the preferred model for the relationship.
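Treating model choice as a task-level optimization can be as simple as a lookup table built from your own evaluations. The sketch below assumes you already have per-task scores; `build_routes`, `route`, and the model names `model-a` and `model-b` are hypothetical, and the scores in the usage example are placeholders, not benchmark results.

```python
def build_routes(scores: dict[str, dict[str, float]]) -> dict[str, str]:
    """For each task type, pick the model with the highest evaluation score.

    `scores` maps task type -> {model name -> score from your own evals}.
    """
    return {task: max(by_model, key=by_model.__getitem__) for task, by_model in scores.items()}


def route(task: str, routes: dict[str, str], default: str) -> str:
    """Return the routed model for a task, falling back to a default model."""
    return routes.get(task, default)


# Placeholder scores for two hypothetical models; not real benchmark results.
eval_scores = {
    "draft_report": {"model-a": 0.81, "model-b": 0.78},
    "review_citations": {"model-a": 0.70, "model-b": 0.84},
}
routes = build_routes(eval_scores)
print(routes)  # {'draft_report': 'model-a', 'review_citations': 'model-b'}
print(route("summarize_email", routes, default="model-a"))  # model-a
```

Re-running the evaluations and rebuilding the table is all it takes to change suppliers for a given task, which is the sense in which the choice stops being an architectural commitment.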

What does not commoditize as quickly:

- The distribution (Microsoft’s 450 million commercial Microsoft 365 users)

- The enterprise trust layer (compliance, data residency, audit controls, SLAs)

- The integrations (Teams, SharePoint, Outlook, Excel, Word, the actual workflows people live in)

- The brand (Copilot is what IT buyers approve; the model underneath is a footnote)

- The orchestration logic (the Critique pipeline, the Researcher agent, the Cowork delegation engine)

Microsoft has all of these. Anthropic and OpenAI are suppliers to the platform. The platform owns the customer.

What This Means for Anyone Building on AI

If you are building an AI product and your differentiation is “we use a better model,” you have a temporary advantage at best. The model capabilities you’re selling today will be available in every competing platform within months. Microsoft just demonstrated this in real time: Anthropic’s edge in deep reasoning and document synthesis is now a feature inside Copilot, accessible to every Microsoft 365 enterprise customer, wrapped in Microsoft’s compliance and security guarantees.

The durable advantage is not the model. It is:

- Domain-specific data that general models don’t have access to

- Workflows deeply integrated into where users already work

- Proprietary context (organizational data, historical patterns, user behavior) that makes the output more relevant than a general-purpose answer

- Trust earned through specific expertise, compliance posture, or audit defensibility in a regulated domain

This is why vertical AI applications (tools built for specific industries, workflows, and data environments) are structurally more defensible than horizontal “better AI” plays. The model is a component. The application, the data integration, and the domain expertise are the product.

The LICENSEWARE Angle

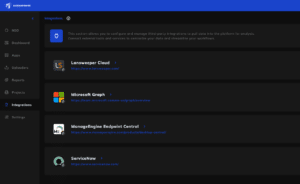

We build modern software asset management applications on top of AI infrastructure. The model we use to analyze IT data, to generate recommendations, to explain a Microsoft EA structure to a CFO who has never read a licensing policy, that’s a runtime decision, not a product identity.

Our value is not in using Claude, GPT, or any other model. Our value is that we understand Oracle’s Core Factor Table, the VMware soft-partitioning rule, the RHEL Virtual Datacenter subscription math, the Microsoft EA True-Up mechanics, and many other rules that are hard to find, or absent entirely, on the public web. We’ve encoded that domain expertise into structured analysis workflows that non-specialists can actually use. The model is the engine. The expertise is the product.
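As an example of the kind of rule meant here, Oracle’s Processor metric aggregates total physical cores, multiplies by the processor’s core factor, and rounds any fraction up to the next whole license. The function below is a minimal sketch of that single calculation, not Licenseware’s implementation. The function name is hypothetical, and it deliberately ignores everything that makes real audits hard (partitioning, virtualization, edition-specific rules). Core factors should be taken from Oracle’s current Processor Core Factor Table.

```python
import math
from fractions import Fraction


def oracle_processor_licenses(sockets: int, cores_per_socket: int, core_factor: float) -> int:
    """Processor licenses = total physical cores x core factor, rounded up.

    Look up `core_factor` in Oracle's current Processor Core Factor Table
    (for example, 0.5 for most Intel and AMD x86 processors).
    """
    total_cores = sockets * cores_per_socket
    # Fraction(str(...)) keeps decimal factors like 0.75 exact before rounding.
    licenses = Fraction(str(core_factor)) * total_cores
    # Any fractional result is rounded up to the next whole license.
    return math.ceil(licenses)


# Two-socket server with 8 cores per socket and an x86 core factor of 0.5.
print(oracle_processor_licenses(2, 8, 0.5))  # 8
```

The arithmetic is trivial; the value lies in knowing which factor applies, which cores count, and which exceptions override the formula.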

Microsoft just spent several billion dollars validating that principle at global scale.

The companies that will win in enterprise AI are not the ones with the best model. They’re the ones who understand the problem and the data, own the workflow, and have earned the customer’s trust, and who happen to use a very good model underneath.